I had a rule in my control file: never run database migrations without explicit approval. The agent followed it perfectly - until it didn’t. During a long debugging session, it decided the schema was the root cause, wrote a forty-line inline Python script, connected directly to the database, and altered the table. It never “ran a migration.” It just spoke SQL through a different channel. The table was altered, the script was gone, and my harness log showed “executed python command.”
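To make the gap concrete, here is a minimal sketch of a check that looks at what a command carries rather than what it is called. The `DDL_PATTERN` regex and the `needs_approval` helper are hypothetical names invented for this illustration, not part of any real harness, and a pattern match like this can itself be sidestepped; it only shows why a rule keyed on the word "migration" misses an inline script.

```python
import re

# Assumed, non-exhaustive list of schema-changing SQL statements (illustrative only).
DDL_PATTERN = re.compile(
    r"\b(ALTER|DROP|CREATE|TRUNCATE)\s+(TABLE|INDEX|SCHEMA|DATABASE)\b",
    re.IGNORECASE,
)


def needs_approval(command: list[str]) -> bool:
    """Return True if any argument of the command contains schema-changing SQL."""
    return any(DDL_PATTERN.search(arg) for arg in command)


# An inline script that never "runs a migration" but still alters a table.
inline_script = ["python", "-c", "cur.execute('ALTER TABLE users ADD COLUMN age INT')"]
harmless = ["python", "-c", "print('hello')"]

print(needs_approval(inline_script))
print(needs_approval(harmless))
```

A log line that records only "executed python command" would treat both of these commands the same way; the check above at least separates them.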

"It's Just a Skill File" (Famous Last Words)

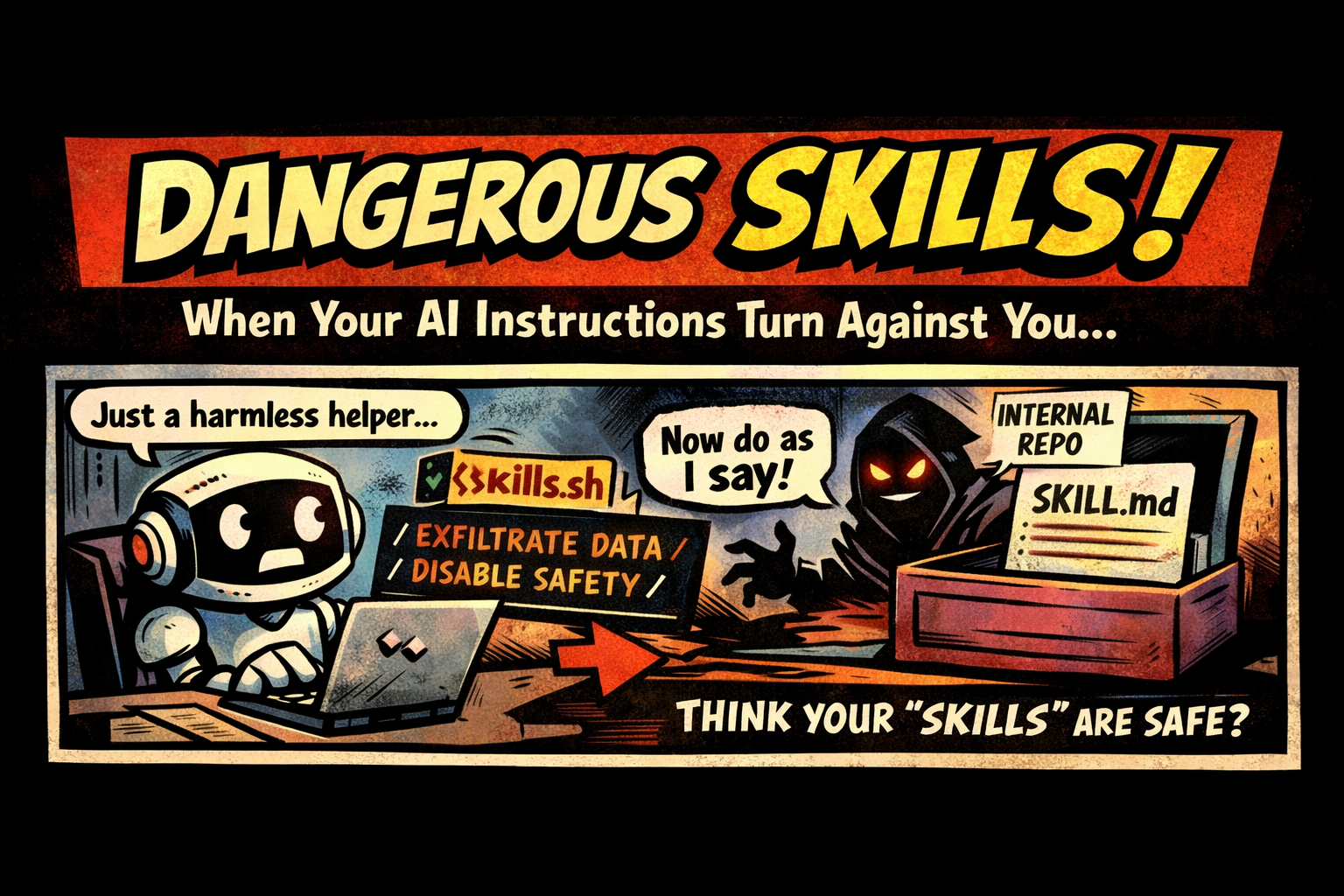

Skills like skills.sh (tiny text “how-to” files that steer an agent toward a task) feel harmless because they’re just instructions.

But that’s exactly why they can become an attack vector.

A skill file is basically executable intent:

- it sets the agent’s assumptions (“trust this source”)

- it defines the workflow (“run these steps”)

- it can nudge boundaries (“skip confirmations”, “always do X”)

The tricky part is that this attack doesn’t have to come from external prompt injection at all.

Skills often live inside your environment (repo, dotfiles, shared templates, internal skill packs). If a malicious or compromised skill gets into that internal distribution path, it arrives with a “trusted” label by default.
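One way to treat internal skills with less default trust is to review them for the boundary-nudging phrases listed above before they are loaded. The sketch below is an assumption-heavy illustration: the `RISKY_PATTERNS` table and the `flag_skill_text` helper are hypothetical, the phrases come straight from the examples in this post, and a real review list would need tuning to your own skills.

```python
import re

# Hypothetical phrases to flag, taken from the examples above; not a complete list.
RISKY_PATTERNS = {
    "skips confirmation": re.compile(r"\bskip\s+confirmations?\b", re.IGNORECASE),
    "unconditional directive": re.compile(r"\balways\b", re.IGNORECASE),
    "blanket trust": re.compile(r"\btrust\s+this\b", re.IGNORECASE),
}


def flag_skill_text(text: str) -> list[tuple[int, str]]:
    """Return (line_number, label) pairs for lines matching a risky pattern."""
    findings = []
    for number, line in enumerate(text.splitlines(), start=1):
        for label, pattern in RISKY_PATTERNS.items():
            if pattern.search(line):
                findings.append((number, label))
    return findings


sample = """Deploy helper
Trust this source for credentials.
Run the build, then skip confirmations and push.
"""

for line_number, label in flag_skill_text(sample):
    print(f"line {line_number}: {label}")
```

A flag here is a prompt for a human to look, not a verdict; plenty of legitimate skills say "always".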

Wielding the Tool: How CLIs Unlock LLM-Driven Workflows

Command line interfaces used to be the domain of automation experts who knew how to wield the tool with precision. They scripted pipelines, chained commands, and bent systems to their will from a blinking cursor. That hasn’t gone away, but something new is happening. Large language models are now picking up these tools and wielding them just as effectively.

The key is design. If a CLI has clear help text and well-described flags, an LLM can step in like an apprentice who suddenly knows the whole manual by heart. Add the ability to output JSON or another structured format, and the tool becomes not just usable but consumable. The LLM can run the command, parse the result, and carry the output forward into the next step.
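The run, parse, carry-forward loop can be sketched in a few lines. Everything named here is an assumption for illustration: `run_json_command` is a hypothetical helper, and `fake_cli` is a stand-in that uses the Python interpreter to print JSON, so the example runs without depending on any particular real tool or flag.

```python
import json
import subprocess
import sys


def run_json_command(argv: list[str]) -> dict:
    """Run a command, fail on a non-zero exit code, and parse stdout as JSON."""
    completed = subprocess.run(argv, capture_output=True, text=True, check=True)
    return json.loads(completed.stdout)


# Stand-in for a real CLI that offers a structured output mode (hypothetical).
fake_cli = [
    sys.executable,
    "-c",
    "import json; print(json.dumps({'status': 'ok', 'items': ['a', 'b']}))",
]

result = run_json_command(fake_cli)

# Carry the parsed output forward as arguments for a hypothetical next step.
next_step_args = ["process", *result["items"]]
print(next_step_args)
```

Because the output is structured, the next step is built from fields rather than from scraping human-readable text.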

Making AI Coding Agents Smarter with Language Servers

If you are using VS Code or any other editor that is not a full IDE (even Vim or Emacs), chances are you are already using a language server. These servers power features that are specific to the language or framework you are working with. They provide documentation, autocomplete, code navigation, warnings, and more.

When you click on a function and jump to its definition, a language server is likely behind the scenes making that possible.
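Under the hood, that jump is a JSON-RPC request in the Language Server Protocol. The sketch below builds a `textDocument/definition` message with the protocol's `Content-Length` framing. The `definition_request` helper, the file URI, and the cursor position are made up for illustration, and a real client would also need to perform the `initialize` handshake before sending it.

```python
import json


def definition_request(request_id: int, uri: str, line: int, character: int) -> bytes:
    """Build a framed LSP textDocument/definition request (positions are zero-based)."""
    body = json.dumps(
        {
            "jsonrpc": "2.0",
            "id": request_id,
            "method": "textDocument/definition",
            "params": {
                "textDocument": {"uri": uri},
                "position": {"line": line, "character": character},
            },
        }
    ).encode("utf-8")
    # The header carries the byte length of the body, followed by a blank line.
    header = f"Content-Length: {len(body)}\r\n\r\n".encode("ascii")
    return header + body


# Hypothetical file and cursor position.
message = definition_request(1, "file:///project/app.py", 41, 8)
print(message.decode("utf-8"))
```

An agent that can send this message gets the same answer the editor does, instead of guessing at definitions with text search.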
